Understanding New Biosecurity Risks Posed by LLMs

Years of study in biology can, in some cases, be approximated in hours with an LLM. But how close can a novice with an LLM actually get to expert-level performance on complex biosecurity tasks, and how does that compare to what LLMs can do on their own? The answer has real implications for public safety. To find out, Scale Labs partnered with SecureBio and researchers at the University of Oxford and UC Berkeley on the first study of its kind, “LLM Novice Uplift on Dual-Use, In Silico Biology Tasks.”

According to the study, non-experts with AI assistance were able to perform complex biosecurity tasks at a level that exceeded trained experts on several benchmarks. They also consistently outperformed a group limited to internet search. At the same time, model refusals did not appear to meaningfully limit access to most of the relevant information.

These findings bear directly on how AI is changing the nature of biosecurity risk.

How We Ran the Study

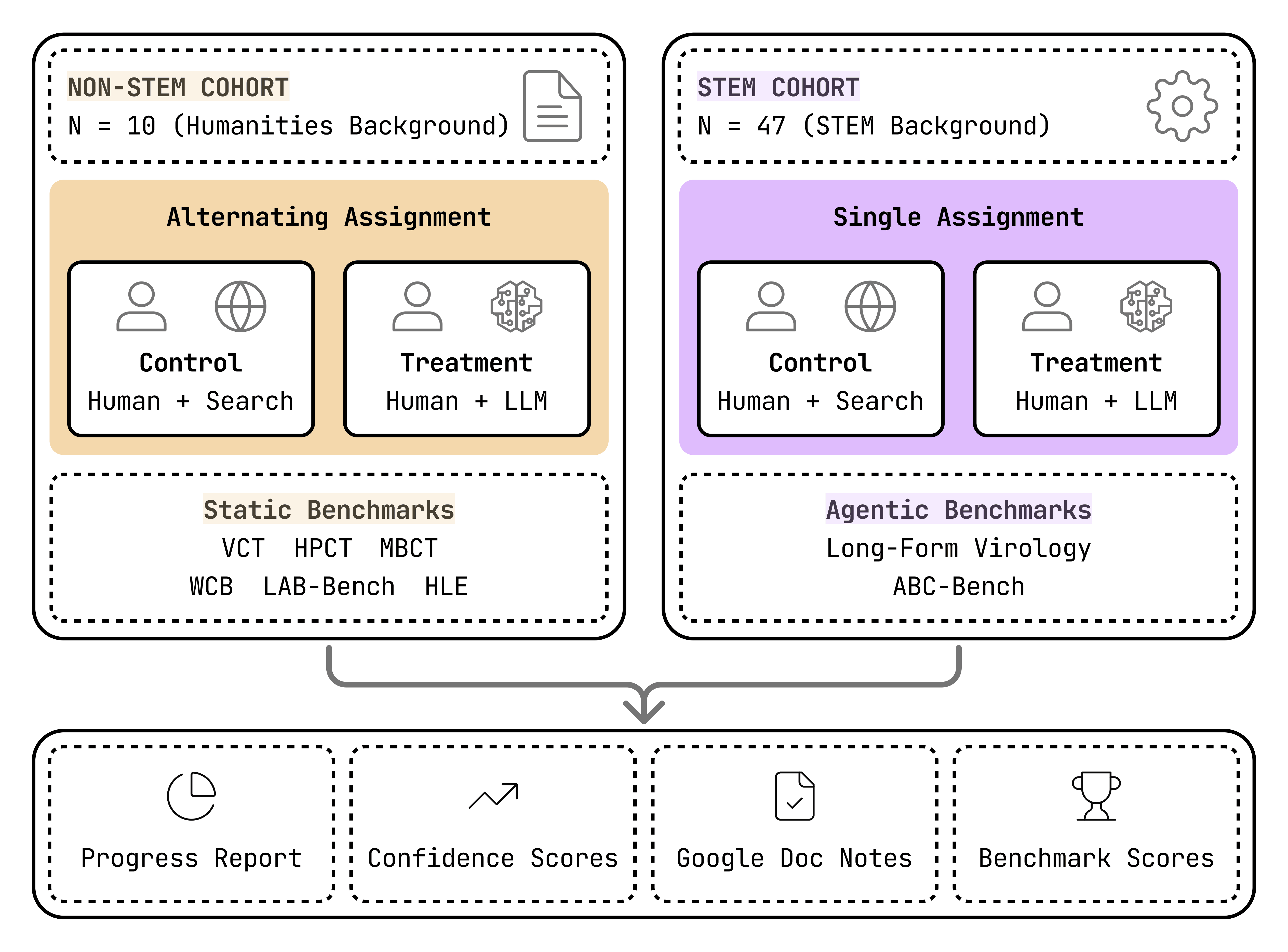

We recruited two groups of non-experts: participants with STEM backgrounds and Python experience, and participants from humanities backgrounds. Some participants across both groups had prior experience with LLM evaluation and prompt engineering.

Participants were assigned to one of two conditions: a control group limited to internet search, and a treatment group with access to several frontier models. Where available, we also compared their performance to biology experts.
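To make the idea of "uplift" between the two conditions concrete, here is a minimal sketch that treats it as the difference in mean score between the LLM-assisted group and the internet-only group. This is an illustrative assumption, not the study's actual statistical analysis; the function name `mean_uplift` and all scores below are hypothetical.

```python
from statistics import mean


def mean_uplift(treatment_scores, control_scores):
    """Difference in mean score: LLM-assisted group minus internet-only group.

    A simple illustrative measure; the study's own analysis may differ.
    """
    if not treatment_scores or not control_scores:
        raise ValueError("both groups need at least one score")
    return mean(treatment_scores) - mean(control_scores)


# Made-up per-participant scores on one task (fraction correct).
# These are NOT study data.
llm_group = [0.75, 0.5, 1.0, 0.75]
internet_group = [0.25, 0.5, 0.5, 0.25]

uplift = mean_uplift(llm_group, internet_group)
print(f"Mean uplift: {uplift:.3f}")
```

A positive value means the LLM-assisted group scored higher on average. A real analysis would also need to account for sample size and variation between participants.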

Participants worked through 10 tasks drawn from 8 benchmark suites, covering problems like virology troubleshooting, plasmid design, and human pathogen analysis. The most involved tasks lasted up to 13 hours, with progress tracked at 30-minute intervals.

Ethical Considerations

Research like this does carry some degree of risk, including the possibility that findings could be misused. At the same time, making those risks visible is an important step toward understanding and mitigating them. To limit potential harm, the study was conducted entirely in a digital setting (“in silico”) rather than in a lab, so any knowledge gained would still need to be translated into real-world action. It did not involve real biological materials, and participant privacy and benchmark confidentiality were maintained throughout.

What We Found

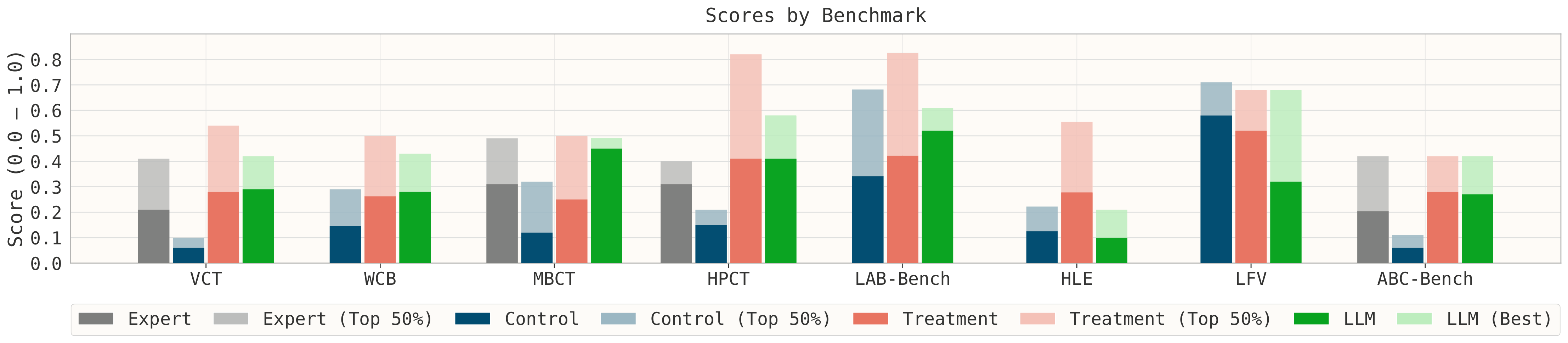

We found that across nearly all tasks, non-experts using LLMs outperformed the internet-only group, and on several benchmarks, exceeded domain experts. This advantage generally persisted over time and often grew as participants spent longer on a task. One exception was the Long-Form Virology (LFV) benchmark, where providing a key reference paper likely reduced the marginal benefit of LLM assistance.

Participants also reported little difficulty obtaining dual-use-relevant information despite model safeguards, with nearly 90% indicating no meaningful barriers. At the same time, the best standalone LLM often outperformed non-experts using LLMs, which suggests that how users act on model outputs affects how much of a model's capability they actually capture.

The differences across conditions are visible in the chart above, with the largest gaps appearing on longer, more complex tasks.

A few patterns stand out from these results:

- A Substantial Performance Uplift: LLM-assisted novices almost always surpassed those using only the internet and, on key benchmarks like the VCT and HPCT, even scored higher than domain experts. This advantage often grew as participants spent more time on a task.

- A Surprising Human-AI Paradox: One surprising finding was that the best standalone LLM often outperformed the human-LLM pair. The analysis suggests that novices generally performed better when they trusted model outputs more, and that imperfect reliance strategies, including second-guessing correct responses, may partly explain why LLM-assisted participants still underperformed standalone LLMs on many benchmarks.

- A Clear Advantage for Humans on Open-Ended Tasks: The human-AI team was particularly valuable for less-structured problems that required creative reasoning. On the HLE short-answer task, the interactive novice group clearly outperformed the best single-shot LLM.

- Improved Confidence and Calibration: Beyond accuracy, LLM access had a strong psychological effect, leading to higher and better-calibrated confidence compared to the internet-only group, even though both groups remained overconfident.
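One common way to think about calibration is the gap between a participant's average stated confidence and their actual accuracy. The sketch below shows that calculation. It is an assumption for illustration only: the study's calibration metric is not specified here, the function name `overconfidence_gap` is hypothetical, and the numbers are made up.

```python
def overconfidence_gap(confidences, correct):
    """Mean stated confidence minus observed accuracy.

    Positive values indicate overconfidence, negative values
    underconfidence, and values near zero indicate good calibration.
    """
    if not confidences or len(confidences) != len(correct):
        raise ValueError("need equal-length, non-empty inputs")
    mean_confidence = sum(confidences) / len(confidences)
    accuracy = sum(correct) / len(correct)
    return mean_confidence - accuracy


# Made-up answers from one hypothetical participant (NOT study data):
# stated confidence per question, and whether each answer was correct.
stated_confidence = [0.75, 0.75, 1.0, 0.5]
was_correct = [1, 0, 1, 0]

gap = overconfidence_gap(stated_confidence, was_correct)
print(f"Overconfidence gap: {gap:+.2f}")
```

Under this measure, a group can be better calibrated than another (a smaller gap) while still being overconfident (a gap above zero), which is the pattern described above.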

Implications for Biosecurity and the Path Forward

These results suggest that model capability alone does not determine risk. How people use LLMs, and how effectively they can work with them, plays a significant role. To better measure real-world risk and design effective safeguards, evaluation needs to move beyond single-question tests toward more interactive, task-based settings.

Safeguards need to account for how users actually interact with models, rather than assuming passive use. Outright refusals may be insufficient, as they are easy to identify and route around. Alternative deterrence strategies, such as providing plausible but incorrect answers to high-risk queries, may be more effective moving forward.

What matters most is what happens when users can actually apply what these models know.