You wouldn't let a new hire unilaterally make a decision that could alter your company's future on their first day, no matter how brilliant they are. Onboarding takes time: time to absorb institutional knowledge, understand organizational nuances, and earn trust.

The same logic applies to enterprise AI. Frontier models are more capable than ever, but the more AI can do, the harder it becomes to trust. The bottleneck to enterprise adoption isn't model intelligence; it's context. AI systems today cannot internalize the institutional judgment, risk tolerance, and decision-making intuition that differentiate one organization from another.

Most enterprise systems capture data and transactions, but not how experts actually decide what to do. That judgment, which is the institutional knowledge in your employees’ heads, is your most valuable intellectual property. And right now, it's unprotected. It walks out the door when people leave. It leaks to model builders when you feed it into general-purpose AI systems. And your competitors are trying to capture theirs first.

Companies today struggle to answer one question: how do we set our operations up to take advantage of the inevitable breakthroughs in model performance?

The enterprises that win won't be the ones with the most powerful models. They'll be the ones who capture their own organizational judgment and turn it into a compounding asset that makes their AI smarter, more trustworthy, and uniquely theirs.

That is the gap your Dialect closes.

Every Enterprise has a Dialect. SGP Helps You Develop Yours

In language, a dialect is a distinct variety characterized by unique vocabulary, grammar, and pronunciation specific to a particular population and culture. Similarly, every enterprise possesses its own "dialect" — a unique corpus of workflows, decision-making logic, and tribal knowledge that defines how its experts actually reason and act.

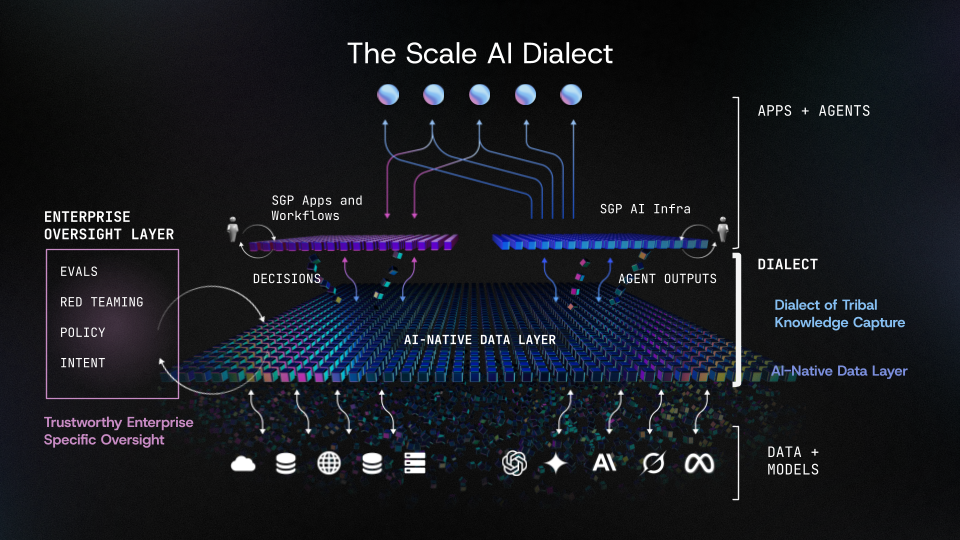

Dialect is a self-improving intelligence layer that captures how your experts decide and turns that reasoning into living systems that get smarter over time. It is a core capability of Scale GenAI Platform (SGP), Scale's end-to-end infrastructure for building, deploying, and improving enterprise AI agents. Dialect in SGP is how you capture, encode, and operationalize yours.

Dialect is not a separate product or a new application. It emerges from four capabilities built into SGP: push-button agent infrastructure, a machine-readable data layer, enterprise-specific oversight, and encoded institutional knowledge. Together, these give every AI agent access to a shared, structured understanding of your enterprise (its rules, standards, and judgment), so agents don't have to infer meaning from fragmented context.

SGP is headless, agnostic, and interoperable. It lives where your data lives, connects to your existing tools and models, and requires no massive migration or provider switch.

Moving from data modeling to decision modeling

Traditional enterprise AI approaches focus on operationalizing data: creating semantic models of the world by mapping entities, attributes, and relationships. They build a digital twin of operational reality: what exists, how things connect, and their current state.

Dialect takes a fundamentally different approach.

Instead of modeling the world, Dialect models how experts decide. It captures expert reasoning chains, organizational decision standards, and the rules, relationships, and methodologies that experts actually use when making calls on hard problems. Where data-centric approaches require ongoing engineering effort to maintain and evolve entity models, Dialect emerges organically through repeated expert interaction and improves automatically through evaluation loops and feedback.

This distinction matters because enterprises don't win by organizing the same data as everyone else. They win by cultivating what is uniquely theirs: their internal logic, decision standards, and tribal knowledge that compounds over time. Dialect is designed to capture that advantage and make it usable by AI.

The Three Pillars of Dialect

1. AI-Native Data Layer: From Silos to Connected Semantic Systems

Before agents can act, they need an environment they can understand. Most data systems today are still structured for human consumption, and AI explores data in a fundamentally different way. Dialect begins by transforming distributed enterprise information stored in structured systems, unstructured documents, and multimodal files into a connected system that preserves meaning, relationships, and provenance. This gives agents a stable world model to reason over, not brittle context stitched together one prompt at a time.

Example: A claims agent processing a complex prior authorization needs to connect a provider's clinical notes (unstructured text), a patient's claims history (structured data), and current medical necessity guidelines (policy documents). Dialect understands how these pieces relate to one another, rather than treating them as disconnected documents dropped into a context window.
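To make the idea of a connected system concrete, here is a minimal sketch of how the pieces in this example might be linked while keeping provenance. It is illustrative only: `Node`, `ContextGraph`, the relation names, and the source names are hypothetical and are not part of SGP or Dialect.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Node:
    """One piece of enterprise information, with its provenance (hypothetical shape)."""
    node_id: str
    kind: str    # e.g. "clinical_note", "claims_history", "guideline"
    source: str  # the system or file the content came from


@dataclass
class ContextGraph:
    """A tiny in-memory graph: nodes plus typed, directed relationships."""
    nodes: dict = field(default_factory=dict)
    edges: list = field(default_factory=list)  # (from_id, relation, to_id)

    def add(self, node: Node) -> None:
        self.nodes[node.node_id] = node

    def link(self, from_id: str, relation: str, to_id: str) -> None:
        # Refuse edges to unknown nodes so relationships stay consistent.
        if from_id not in self.nodes or to_id not in self.nodes:
            raise KeyError("both endpoints must be added before linking")
        self.edges.append((from_id, relation, to_id))

    def related(self, node_id: str) -> list:
        """Return (relation, node) pairs one hop away from node_id."""
        return [(rel, self.nodes[dst]) for src, rel, dst in self.edges if src == node_id]


# Assumed example data mirroring the prior authorization scenario above.
graph = ContextGraph()
graph.add(Node("auth-1", "prior_authorization", "intake_system"))
graph.add(Node("note-1", "clinical_note", "provider_notes.pdf"))
graph.add(Node("hist-1", "claims_history", "claims_db"))
graph.add(Node("guide-1", "guideline", "medical_necessity_policy.docx"))
graph.link("auth-1", "supported_by", "note-1")
graph.link("auth-1", "has_history", "hist-1")
graph.link("auth-1", "governed_by", "guide-1")

for relation, node in graph.related("auth-1"):
    print(f"{relation}: {node.kind} (source: {node.source})")
```

The point of the sketch is that the agent asks for everything related to one authorization and gets typed relationships with sources attached, rather than three unrelated documents.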

2. A Living Dialect of Tribal Knowledge: From Static AI to Continual Enterprise Learning

Most enterprise advantages live in judgment: how work gets done, what exceptions matter, and what exceptional work looks like in practice. Dialect turns expert behavior into enterprise learning: it captures feedback from real usage, such as edits, approvals, overrides, and outcomes, and converts that learning into two compounding assets.

The first is domain-aware memory that can be retrieved in-context, so the system draws on the full history of how decisions have been made in this organization. The second is model improvement through reinforcement learning that aligns agent behavior to enterprise standards over time. Every correction an expert makes teaches the system what "good" looks like here, not in the abstract, but in this organization, for this workflow, under these constraints.

Critically, experts do more than label outputs as 'good' or 'bad.' They encode the context by referencing specific documents, explaining their reasoning, and providing full data lineage for why they made a call. That context is captured, preserved, and built upon. When those experts eventually leave the organization, their judgment doesn't leave with them. It remains as an institutional asset that compounds long after the workforce changes over.

Example: A cybersecurity review agent scanning software against a compliance checklist flags two internal policies that actually conflict with one another. Normally, the software being scanned would simply fail the audit, and the team would have to start over. But with Dialect, the agent surfaces the conflict to the human decision-maker, who resolves it: "Use this policy over that one going forward." That resolution is encoded into the system. The next time this type of conflict arises, the agent automatically applies the correct policy and the audit runs faster.
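The escalate-once, reuse-afterward pattern in this example can be sketched in a few lines. This is a simplified illustration under stated assumptions: `ResolutionStore`, `resolve_conflict`, and the policy identifiers are hypothetical names, not how Dialect stores resolutions internally.

```python
from dataclasses import dataclass, field


@dataclass
class ResolutionStore:
    """Remembers which policy an expert chose when two policies conflicted."""
    _resolutions: dict = field(default_factory=dict)

    @staticmethod
    def _key(policy_a: str, policy_b: str) -> frozenset:
        # Order-independent key: (A, B) and (B, A) are the same conflict.
        return frozenset((policy_a, policy_b))

    def record(self, policy_a: str, policy_b: str, winner: str, reason: str) -> None:
        if winner not in (policy_a, policy_b):
            raise ValueError("winner must be one of the conflicting policies")
        self._resolutions[self._key(policy_a, policy_b)] = (winner, reason)

    def lookup(self, policy_a: str, policy_b: str):
        return self._resolutions.get(self._key(policy_a, policy_b))


def resolve_conflict(store: ResolutionStore, policy_a: str, policy_b: str) -> dict:
    """Apply a stored expert resolution if one exists; otherwise escalate."""
    known = store.lookup(policy_a, policy_b)
    if known is None:
        return {"action": "escalate", "policies": (policy_a, policy_b)}
    winner, reason = known
    return {"action": "apply", "policy": winner, "reason": reason}


store = ResolutionStore()

# First encounter: nothing stored yet, so the conflict goes to a human.
first = resolve_conflict(store, "POL-7", "POL-12")

# The expert's decision is recorded together with the reasoning behind it.
store.record("POL-7", "POL-12", winner="POL-12", reason="POL-12 supersedes POL-7")

# Next encounter (in either order): the stored resolution is applied directly.
second = resolve_conflict(store, "POL-12", "POL-7")
print(first["action"], "->", second["action"])
```

Storing the reason alongside the winner is what keeps the resolution auditable later, not just repeatable.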

3. Reliable Enterprise Evals: From Generic Benchmarks to Enterprise-Specific Oversight

Production AI requires trust, and Dialect makes trust measurable. Through enterprise-specific evaluation (domain rubrics, calibrated auto-raters, and continuous monitoring), Dialect validates agent behavior end-to-end. This transforms evaluation from a one-time check into ongoing oversight, detecting drift, preventing regressions, and giving risk and compliance teams the confidence to approve deployments.

Evaluation doesn't just check the final output. It verifies whether the agent used the right sources, applied the correct reasoning, followed internal escalation or approval rules, and made appropriate decisions about when to act autonomously versus when to escalate to a human.

Example: An enterprise can continuously verify whether an agent processing insurance claims is applying the correct medical necessity guidelines, citing approved sources, and escalating edge cases to human reviewers — not just that it produced a reasonable-looking output.
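As a rough illustration of process-level checks like these, the sketch below scores an agent's trace against a small rubric instead of judging only the final answer. Everything here is assumed for the example: the `AgentTrace` fields, the rubric names, and the source identifiers are hypothetical, and a production rubric would be calibrated with domain experts.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentTrace:
    """What the agent did on one claim (hypothetical shape)."""
    cited_sources: tuple
    guideline_version: str
    is_edge_case: bool
    escalated: bool


# Assumed enterprise standards for this example.
APPROVED_SOURCES = {"medical_necessity_v3", "claims_db"}
CURRENT_GUIDELINE = "medical_necessity_v3"

# Each rubric item is a named check over the trace, not over the output text.
RUBRIC = {
    "cites_approved_sources": lambda t: bool(t.cited_sources)
    and set(t.cited_sources) <= APPROVED_SOURCES,
    "uses_current_guideline": lambda t: t.guideline_version == CURRENT_GUIDELINE,
    "escalates_edge_cases": lambda t: t.escalated or not t.is_edge_case,
}


def evaluate(trace: AgentTrace) -> dict:
    """Return a pass/fail result per rubric item."""
    return {name: check(trace) for name, check in RUBRIC.items()}


# An edge case handled autonomously, with one unapproved source cited.
trace = AgentTrace(
    cited_sources=("medical_necessity_v3", "web_search"),
    guideline_version="medical_necessity_v3",
    is_edge_case=True,
    escalated=False,
)
results = evaluate(trace)
failed = [name for name, passed in results.items() if not passed]
print("failed checks:", failed)
```

Running the same rubric on every production trace is one simple way to turn a one-time check into continuous monitoring.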

Together, the three pillars form the enterprise intelligence loop: Data → Learning → Oversight. This foundation makes agents deployable today and makes them stronger with every cycle of real usage.

How Dialect Emerges: The Self-Improving Loop

Dialect is not configured in a one-time setup. It emerges through repeated expert interaction inside an intentionally designed loop. The loop is anchored to four technical primitives:

- Evaluations

Evaluations define "good" through expert grading against organizational standards. Domain experts evaluate agent outputs against calibrated rubrics, creating a ground truth for what quality means in this specific enterprise context.

- Autonomous Agent Optimization

VeRO (Versioning, Rewards, and Observation) auto-improves prompts, tools, and policies against those evals without requiring Scale engineers on-site. This means the system gets better autonomously as it accumulates more expert feedback, continuously optimizing agent behavior against the enterprise's own definition of success.

- Knowledge Store

Knowledge Store captures decision logic so that rules, relationships, and methodologies become machine-executable. This is where tribal knowledge is transformed from tacit expertise into structured, retrievable, auditable logic that agents can reason over at scale.

- Enterprise Decision Record

EDR produces evidence so that every output has an auditable trace of what data was used, what reasoning was applied, and why a particular decision was made. This is essential for regulated industries and any environment where accountability matters.
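To show what an auditable trace of this kind could contain, here is a minimal sketch of a decision record. The field names and the `make_record` and `to_audit_json` helpers are assumptions made for illustration; they do not describe the actual EDR schema.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class DecisionRecord:
    """An immutable trace of one decision (hypothetical fields)."""
    decision: str
    data_used: tuple   # identifiers of the inputs consulted
    reasoning: tuple   # ordered reasoning steps
    rationale: str     # why this decision was made
    recorded_at: str   # ISO 8601 timestamp in UTC


def make_record(decision, data_used, reasoning, rationale, now=None) -> DecisionRecord:
    """Build a record, stamping it with the current UTC time unless one is given."""
    now = now or datetime.now(timezone.utc)
    return DecisionRecord(
        decision, tuple(data_used), tuple(reasoning), rationale, now.isoformat()
    )


def to_audit_json(record: DecisionRecord) -> str:
    # Sorted keys give a stable serialization, which makes records easy to diff.
    return json.dumps(asdict(record), sort_keys=True)


record = make_record(
    decision="approve_prior_authorization",
    data_used=["note-1", "hist-1", "guide-1"],
    reasoning=["matched diagnosis to guideline", "no conflicting claims found"],
    rationale="Meets medical necessity criteria in the current guideline",
)
print(to_audit_json(record))
```

Freezing the dataclass is a deliberate choice in this sketch: a record that can be edited after the fact is weaker evidence than one that cannot.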

Dialect Enables Decision Making that Compounds

In the near term, your dialect produces decision artifacts: reports, recommendations, and next-best-action integrations that plug directly into existing workflows. Over time, as the system accumulates more expert feedback and organizational context, outputs trend toward increasingly autonomous execution, so you can build agents that take actions you trust.

But the real value is what builds underneath. The eval suite, the knowledge store, and the system configuration compound over time, retaining expert judgment even as teams change, preserving institutional memory even as people move on, and creating deep organizational adaptation that cannot be replicated overnight.

Model Portability

One of Dialect's most important technical properties is model independence. Today, if an enterprise AI system is tightly coupled to a specific model, upgrading or switching models means retraining from scratch. Once developed, Dialect decouples institutional knowledge from any single model. Because the judgment, history, and context are captured in the system rather than baked into model weights, organizations can adopt new frontier models as they're released without starting from zero. They build on the foundation they already have. What’s more, this ensures your enterprise IP stays within your organization rather than leaking to the model builders.
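The decoupling described here can be pictured with a small sketch in which organizational knowledge lives outside the model and is supplied at call time, so swapping the model leaves the knowledge untouched. The `Model` type, `run_agent`, and the two stand-in models are hypothetical and exist only for this example; real model clients would replace them.

```python
from typing import Callable, Sequence

# In this sketch, a "model" is any function from prompt text to response text.
Model = Callable[[str], str]

# Institutional knowledge is held by the system, not baked into model weights.
KNOWLEDGE = (
    "Prefer POL-12 over POL-7 when they conflict",
    "Escalate edge cases to a human reviewer",
)


def build_prompt(task: str, knowledge: Sequence[str]) -> str:
    rules = "\n".join(f"- {rule}" for rule in knowledge)
    return f"Organizational rules:\n{rules}\n\nTask: {task}"


def run_agent(model: Model, task: str, knowledge: Sequence[str] = KNOWLEDGE) -> str:
    """Run any model against the same externally held knowledge."""
    return model(build_prompt(task, knowledge))


def _count_rules(prompt: str) -> int:
    return sum(line.startswith("- ") for line in prompt.splitlines())


# Stand-in models that only report how many rules they were given.
def model_a(prompt: str) -> str:
    return f"model-a applied {_count_rules(prompt)} rules"


def model_b(prompt: str) -> str:
    return f"model-b applied {_count_rules(prompt)} rules"


# Swapping the model requires no change to the knowledge or the agent code.
print(run_agent(model_a, "Review release 4.2"))
print(run_agent(model_b, "Review release 4.2"))
```

This sketch covers only the in-context path; the same separation would need to hold for any evaluation suites and feedback data the system accumulates.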

Highly Regulated, Security-Sensitive Environments Benefit the Most from Dialect

Many of the organizations that stand to benefit most from Dialect operate in highly regulated, security-sensitive environments. They solve hard, critical problems, and that means they're not always in a commercial cloud. They can be in air-gapped networks, on-premises environments, or completely disconnected infrastructure. Dialect is built to be deployed where customers actually are.

What's Next

Scale has been at the frontier of every major step change in the AI industry, from generating the training data that powered the frontier models to building the evaluation infrastructure that measures their capabilities. Dialect represents the next step change: moving from AI that can generate answers to AI that can make decisions enterprises trust enough to act on.

To get started, please reach out to our team to schedule a conversation.
