Voice models are improving fast, and benchmarks are struggling to keep up. Most voice benchmarks don’t use real human speech. Very few are multilingual. And voice quality (whether a model understood what you said, gave you a good answer, and sounded natural doing it) is inherently subjective. The result is that models ship without a clear picture of how they actually perform for real people, in real conversations, across multiple languages.

Today we’re launching Voice Showdown, the first preference arena for voice AI models and the first voice benchmark built entirely on real human speech from a global user base. It draws on real people speaking naturally to a voice assistant, with their accents, background noise, half-finished sentences, and everyday questions. Occasionally, they are shown a blind comparison between two models. Rankings are determined by which model people actually prefer, across 60+ languages and 11 frontier models.

Voice Showdown brings voice to Showdown, our arena for evaluating AI models through real-world usage, which has collected over 29 million prompts from more than 300,000 users globally. These comparisons happen on ChatLab, our model-agnostic chat platform where users interact with multiple frontier models in real conversations. ChatLab has been live for Scale's global community of contributors, and we're opening up the waitlist to the public today.

Check out the leaderboard here.

How Voice Showdown Works

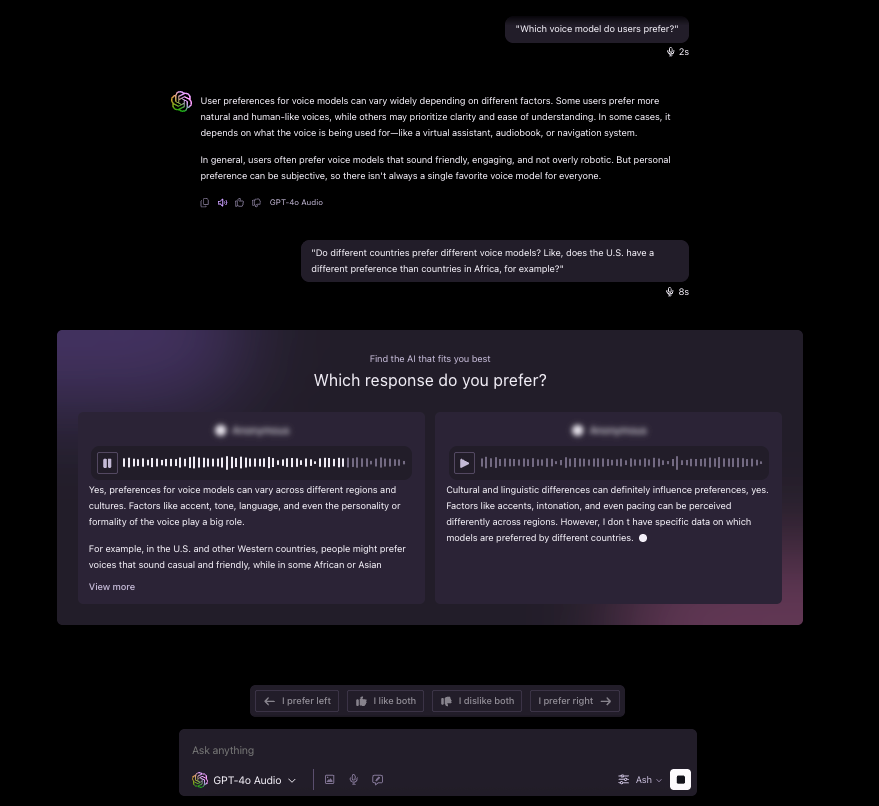

The core idea is simple: while users are talking to a voice model on ChatLab, we occasionally play a second model's answer to the same prompt and they pick which one they prefer. This occurs across two evaluation modes:

- Dictate (speech in, text out): A user speaks a prompt and sees two anonymized text responses side by side.

- Speech-to-Speech: A user speaks and hears two voice responses.

Comparisons are served on fewer than 5% of all voice prompts, within conversations users are already having for their daily use cases. They aren't evaluation-specific inputs — they're real questions, real tasks, real accents, and real background noise. And unlike text benchmarks, most of these prompts don't have verifiable answers — 81% are conversational or open-ended. You can't score that with automated metrics. You need human preference.
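As a rough illustration of the sampling idea above, here is a minimal sketch of deciding when a voice prompt triggers a blind comparison. The names `BATTLE_RATE`, `should_serve_battle`, and `pick_challenger` are hypothetical, and the 5% value is only the upper bound stated in this post; the actual sampling logic is described in the technical report, not here.

```python
import random

# Assumption: the post only says "fewer than 5%", so 0.05 is an upper bound.
BATTLE_RATE = 0.05


def should_serve_battle(rng: random.Random, rate: float = BATTLE_RATE) -> bool:
    """Return True when this voice prompt should trigger a blind comparison."""
    return rng.random() < rate


def pick_challenger(current_model: str, models: list[str], rng: random.Random) -> str:
    """Pick a second model, different from the one the user is already talking to."""
    candidates = [m for m in models if m != current_model]
    if not candidates:
        raise ValueError("need at least two models to run a comparison")
    return rng.choice(candidates)
```

Passing in a `random.Random` instance rather than using the module-level functions keeps the behavior reproducible when testing with a fixed seed.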

A few design choices make these comparisons trustworthy. In Speech-to-Speech, users could recognize their current model by its voice, so during battles the model speaks in a different voice from its own catalog. Voices are also gender-matched across both responses, so preference isn't biased by gender. Both responses begin streaming at the same time, which prevents speed from biasing preference.
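The voice swap and gender matching described above can be sketched as follows. This is an illustration under stated assumptions, not the production logic: the `Voice` type, the `gender` labels, and `pick_battle_voices` are all hypothetical names, and the sketch assumes each model exposes a catalog of labeled voices.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Voice:
    name: str
    gender: str  # hypothetical label used only for matching


def pick_battle_voices(
    catalog_a: list[Voice],
    catalog_b: list[Voice],
    usual_voice_a: str,
    rng: random.Random,
) -> tuple[Voice, Voice]:
    """Pick one voice per model for a blind comparison.

    Model A is the model the user is already talking to, so its usual
    voice is excluded. Model B's voice is chosen to match A's gender.
    """
    options_a = [v for v in catalog_a if v.name != usual_voice_a]
    rng.shuffle(options_a)  # shuffles the local copy, not the caller's catalog
    for voice_a in options_a:
        matches = [v for v in catalog_b if v.gender == voice_a.gender]
        if matches:
            return voice_a, rng.choice(matches)
    raise ValueError("no gender-matched voice pair available")
```

If no gender-matched pair exists, the sketch raises instead of silently serving a mismatched comparison, since a mismatch would reintroduce the bias the design is meant to remove.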

For the full methodology, including sampling, ranking, and style controls, see our technical report.

What We Found

Across thousands of blind comparisons, a few clear findings stand out.

1. Multilingual performance is the biggest differentiator

User preference varies dramatically by language. In Dictate, Gemini 3 Pro and Gemini 3 Flash are tied at #1, with their advantage against other models widening on non-English prompts. In Speech-to-Speech, the winner depends on language: GPT-4o Audio leads in Arabic and Turkish, Gemini 2.5 Flash Audio is strongest in French, and Grok Voice is competitive in Japanese and Portuguese.

At the lower end, some models don’t just perform worse outside English; they default to English entirely. GPT Realtime models respond in English to non-English prompts roughly 20% of the time, even on officially supported, high-resource languages like Hindi, Spanish, and Turkish. As one user put it: "Model answered in English although I clearly said in Portuguese that I want to practice Portuguese."

Existing voice benchmarks largely miss this because they rely on synthetic speech and are rarely multilingual, so language robustness under real acoustic conditions never gets tested.

2. Models fail for different reasons

After every Speech-to-Speech comparison, users tag why they preferred one response across three axes: audio understanding, content quality, and speech output.

Some models understand you and give good answers, but their voice delivery falls short. Others struggle to comprehend what you said in the first place. And some show no dominant failure category at all, indicating more balanced capability.

3. Voice selection changes rankings within the same model

The top-performing voices in each model tend to lose on audio understanding (background noise, code-switching, accents) and content completeness, rather than speech quality. In other words, once a voice clears the bar on sounding good, what separates winners from losers is whether the model heard you correctly and gave a complete answer.

But speech quality still matters at the voice level. Users show strong preferences for certain voices over others within the same model; for one model, the best voice wins 30 percentage points more often than the worst. Beyond being a presentation layer, voice directly shapes how users evaluate the interaction.
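The "percentage points" figure is a difference between two win rates, not a relative change. A minimal sketch of that arithmetic follows; the function names and the record shape (voice name mapped to wins and battles) are assumptions for illustration.

```python
def win_rate(wins: int, battles: int) -> float:
    """Fraction of comparisons a voice won."""
    if battles <= 0:
        raise ValueError("battles must be positive")
    return wins / battles


def voice_gap_pp(records: dict[str, tuple[int, int]]) -> float:
    """Best-minus-worst win rate across a model's voices, in percentage points.

    `records` maps a voice name to (wins, battles).
    """
    if not records:
        raise ValueError("records must not be empty")
    rates = [win_rate(wins, battles) for wins, battles in records.values()]
    return (max(rates) - min(rates)) * 100
```

With invented counts, a voice that wins 65 of 100 comparisons and another that wins 35 of 100 are about 30 percentage points apart, even though the first wins nearly twice as often in relative terms.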

4. Performance shifts with prompt length and conversation depth

On short prompts (under 10 seconds), the primary failure modes are audio understanding and speech output. On long prompts (over 40 seconds), the pattern inverts: content quality becomes the dominant failure. Shorter audio gives models less signal to comprehend the request; longer prompts are understood but harder to answer well.
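The length split above can be reproduced with a simple bucketing step. The sketch below uses the two cutoffs stated in the post (under 10 seconds, over 40 seconds); the "medium" bucket name, the function names, and the `(duration, tag)` record shape are assumptions for illustration.

```python
from collections import Counter, defaultdict

SHORT_MAX_S = 10.0  # "short" means under 10 seconds
LONG_MIN_S = 40.0   # "long" means over 40 seconds


def length_bucket(duration_s: float) -> str:
    """Classify a prompt by audio duration in seconds."""
    if duration_s < SHORT_MAX_S:
        return "short"
    if duration_s > LONG_MIN_S:
        return "long"
    return "medium"  # assumed name for everything in between


def tags_by_bucket(battles: list[tuple[float, str]]) -> dict[str, Counter]:
    """Count failure tags per length bucket.

    Each item in `battles` is (prompt duration in seconds, failure tag).
    """
    out: dict[str, Counter] = defaultdict(Counter)
    for duration_s, tag in battles:
        out[length_bucket(duration_s)][tag] += 1
    return dict(out)
```

Comparing the tag counts in the "short" and "long" buckets is what would surface the inversion described above, with audio understanding and speech output dominating one and content quality the other.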

Conversational depth tells a different story. Most models perform best on their first turn and decline over extended conversations, but some show the opposite pattern, improving as they accumulate context. The type of failure shifts too: early on, models lose on audio understanding; in longer conversations, the bottleneck moves to content quality. These dynamics only surface in real, multi-turn conversations — exactly the kind of signal that static benchmarks miss.

What’s Next

These findings represent what we can learn from turn-based voice evaluation — but real voice conversations aren't turn-based. People interrupt, talk over each other, and change their minds mid-sentence. Evaluating this kind of full-duplex interaction is coming to Showdown next. Existing benchmarks in this space rely on scripted scenarios and automated metrics; none capture these dynamics through human preference.

Voice AI is the fastest-moving frontier in AI right now, and evaluation needs to move with it. We believe the right approach is preference-based, in-the-wild, and multilingual by default — built on how people actually use voice models, not how researchers design test sets. Voice Showdown is our first step toward that standard.

The leaderboard is live at scale.com/showdown. Get on the early access waitlist for ChatLab to test out models yourself. We'd love to hear what you find!
