AI systems can generate answers, but what they still lack is the judgment that defines how each organization makes decisions. That lack is what makes enterprise AI hard to deploy reliably.

Every organization operates with its own internal logic: how decisions get made, which exceptions matter, what “good” looks like in practice. Dialect is a self-improving intelligence layer that captures how your experts decide and turns that reasoning into systems that get smarter over time.

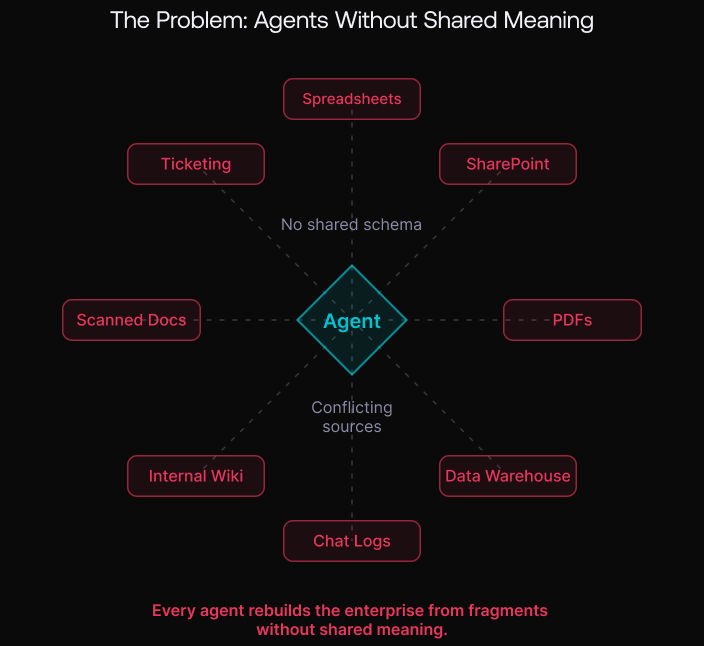

This is where things break down in most enterprises: information is fragmented across systems, formats, and contexts. These systems were built for human use, not for AI. As a result, every agent is forced to reconstruct the enterprise from fragments, stitching together partial context in ways that are brittle and unreliable.

The AI-native data layer, the first pillar of Dialect, addresses this precursor problem. It turns the enterprise into something an AI system can consistently understand and reason over, creating the environment in which a Dialect can emerge.

Why Enterprise Agents Fail

If you’ve spoken with enterprise teams trying to deploy agents, you’ve heard some version of the same thing:

- “We have data everywhere, but agents can’t use it.”

The data exists across spreadsheets, PDFs, SharePoint, internal wikis, ticketing systems, data warehouses, and chat logs, but it’s not accessible to AI in a way that supports reasoning.

- “We tried RAG and it works… until it doesn’t.”

Retrieval helps for simple questions, but breaks under the weight of real workflows: multi-step decisions, edge cases, conflicting sources. What matters is buried in tables, charts, scanned documents, or historical logs.

- “Our docs are inconsistent, our metrics don’t match, and AI hallucinates policies.”

Enterprises rarely have one canonical definition of a metric, process, or policy. When systems aren’t harmonized, AI stitches together partial truths and fails in the exact moments that matter.

- “We spend more time building pipelines than building capabilities.”

Teams end up doing manual work just to make data usable to AI: cleaning, mapping, stitching, building prompt scaffolding, rewriting documentation, maintaining indexes, and repeating the process for each new use case.

Across all of these failure modes, the underlying issue is the same: agents are operating without a consistent view of how enterprise data connects or what it means. Without that, every system is forced to reconstruct the organization from fragments. Enterprise AI efforts stall before the hard part even begins.

From Fragments to Agent-Readable Data

An AI-native data layer transforms the enterprise from a set of disconnected systems into a coherent environment that agents can understand and operate within. It unifies information across:

- Structured sources (databases, warehouses, operational systems)

- Unstructured sources (docs, PDFs, wikis, tickets)

- Multimodal sources (tables, charts, scans, images)

But the objective is more than aggregating data: it’s to preserve meaning. That means capturing shared definitions, relationships between entities, constraints, and the context that informs how information is used. This requires a semantic layer that maintains structure, metadata, and provenance, so agents can reason consistently across systems rather than interpreting each source in isolation.

The result is an agent-ready representation of the enterprise, one that preserves structure, hierarchy, relationships, and traceability, so agents can operate across the organization as a connected whole rather than as a collection of loosely related documents.

Agents move from stitching together partial context to operating within a stable environment. Workflows that once depended on brittle retrieval and manual pipelines become more consistent, and teams spend less time maintaining infrastructure and more time building capabilities.
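To make "preserving meaning" a little more concrete, here is a minimal Python sketch of a semantic layer that ties every value to a shared definition and to the source it came from. It is an illustration under stated assumptions, not how Dialect or SGP is implemented: the class names (`Provenance`, `Fact`, `SemanticLayer`), the field names, and the sample metric are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Provenance:
    """Where a value came from (field names are illustrative assumptions)."""
    source_system: str
    document_id: str
    location: str  # e.g. a page, sheet, or row reference


@dataclass(frozen=True)
class Fact:
    """One value tied to a shared definition and to its origin."""
    entity_id: str
    definition_id: str
    value: float
    provenance: Provenance


@dataclass
class SemanticLayer:
    """Toy in-memory store: shared definitions plus traceable facts."""
    definitions: dict = field(default_factory=dict)
    facts: list = field(default_factory=list)

    def define(self, definition_id: str, description: str) -> None:
        # Register one canonical meaning for a term.
        self.definitions[definition_id] = description

    def add_fact(self, fact: Fact) -> None:
        # Constraint: refuse values that point at an undefined concept.
        if fact.definition_id not in self.definitions:
            raise KeyError(f"unknown definition: {fact.definition_id}")
        self.facts.append(fact)

    def lookup(self, entity_id: str, definition_id: str) -> list:
        # Return every matching fact, each still carrying its provenance.
        return [
            f for f in self.facts
            if f.entity_id == entity_id and f.definition_id == definition_id
        ]


# Hypothetical usage: two systems report the same metric for one entity.
layer = SemanticLayer()
layer.define("net_revenue", "Gross revenue minus returns and discounts")
layer.add_fact(Fact("acme", "net_revenue", 1200.0,
                    Provenance("warehouse", "q3_sales", "row 14")))
layer.add_fact(Fact("acme", "net_revenue", 1180.0,
                    Provenance("sharepoint", "board_deck.pdf", "page 7")))

for fact in layer.lookup("acme", "net_revenue"):
    print(fact.value, fact.provenance.source_system, fact.provenance.location)
```

Because both values resolve to the same definition and keep their provenance, an agent can see that the sources disagree and cite where each number came from, instead of silently picking one.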

Domain Example: Pharmaceutical Data Layer

In the pharmaceutical world, data spans the full drug lifecycle, from discovery and development to manufacturing, regulatory submission, and commercialization. But it is fragmented across systems, domains, and geographies: research databases, clinical repositories, operational logs, real-world evidence, and dense scientific documentation.

Pharma is also a stress test for enterprise AI because critical evidence is deeply multimodal: clinical study reports, protocols, PDFs, figures, tables, scans, and historical logs. Flattening this into plain text loses the structure and relationships scientific reasoning depends on.

An AI-native pharma data layer solves this by building two foundational capabilities:

A Data Fabric Layer that connects structured and unstructured sources through APIs, pipelines, and federated connectors. This enables access to distributed data while preserving relationships and hierarchy across entities like compounds, trials, and patients, supporting context-aware reasoning and regulatory traceability.

An Intelligence Layer (Semantic + Metadata) that enriches this foundation with biomedical ontologies and metadata: harmonizing terminology, aligning definitions, and capturing provenance, lineage, and context. By linking entities through semantic representations, it enables consistent reasoning across domains rather than isolated document lookups.

The goal is not just to index information but to make it usable by AI agents. Together, these layers create a coherent, agent-readable representation of the enterprise that preserves structure, relationships, and context across the full lifecycle.
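As a rough illustration of what "harmonizing terminology" can mean in practice, the Python sketch below maps different names for the same compound to one canonical identifier while remembering which source used which name. The synonym table, the `compound:` identifier scheme, and the function names are assumptions made for this example; a production system would rely on curated biomedical ontologies rather than a hand-written dictionary.

```python
from collections import defaultdict
from typing import Optional

# Hypothetical synonym table standing in for a curated ontology.
SYNONYMS = {
    "acetylsalicylic acid": "compound:aspirin",
    "asa": "compound:aspirin",
    "aspirin": "compound:aspirin",
    "paracetamol": "compound:acetaminophen",
    "acetaminophen": "compound:acetaminophen",
}


def canonical_id(term: str) -> Optional[str]:
    """Normalize a raw term and return its canonical id, if known."""
    return SYNONYMS.get(term.strip().lower())


def link_mentions(mentions):
    """Group (source, term) mentions by canonical concept.

    Returns a dict of canonical id -> list of sources, plus the mentions
    that could not be resolved and should be reviewed rather than guessed.
    """
    linked = defaultdict(list)
    unresolved = []
    for source, term in mentions:
        concept = canonical_id(term)
        if concept is None:
            unresolved.append((source, term))
        else:
            linked[concept].append(source)
    return dict(linked), unresolved


# Hypothetical mentions pulled from different systems.
mentions = [
    ("trial_protocol.pdf", "ASA"),
    ("lab_db", "Aspirin "),
    ("safety_report", "paracetamol"),
    ("site_notes", "compound X"),
]
linked, unresolved = link_mentions(mentions)
print(linked)
print(unresolved)
```

The design choice worth noting is the explicit `unresolved` list: terms the table does not recognize are surfaced for review instead of being matched loosely, which is one way to keep linking decisions traceable.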

Building Blocks of the AI-Native Data Plane

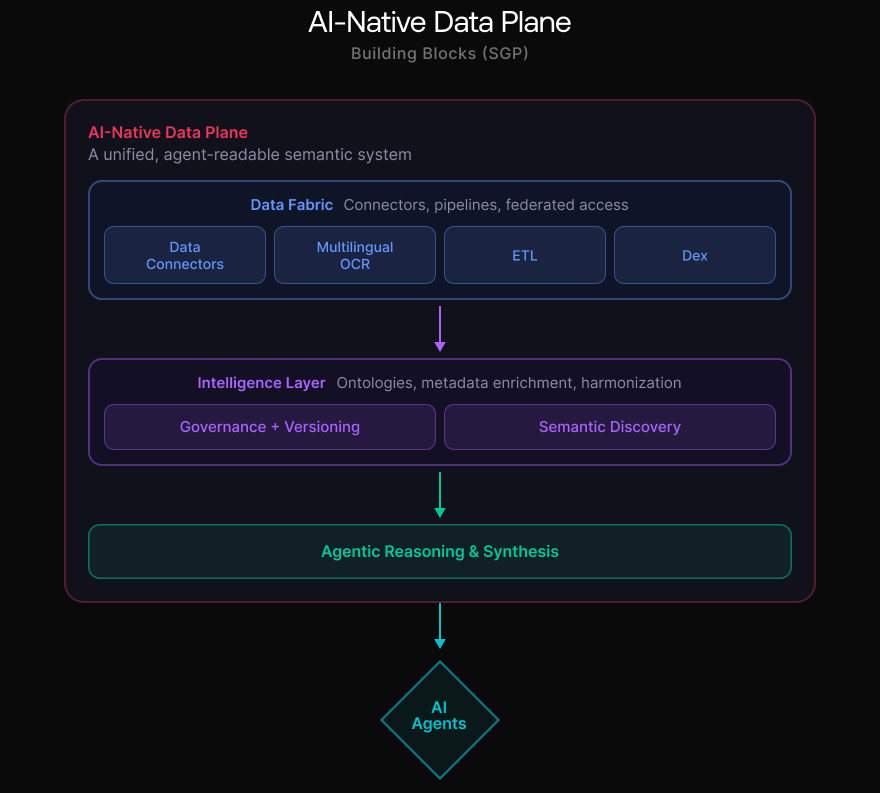

An AI-native data layer is more than a single feature. It’s an integrated system designed to transform fragmented enterprise information into connected semantic assets that agents can reliably use in production.

SGP (Scale GenAI Platform) provides the core building blocks of this AI-native data plane. For example, a clinical report stored as a scanned PDF can be extracted, structured, and linked to patient records and trial data so it becomes part of a system agents can reason over, rather than a standalone document.

Data Connectors (structured + unstructured): Unify the sources where enterprise truth actually lives across operational systems, databases, and knowledge repositories without forcing teams to manually re-platform everything up front.

Multilingual OCR: Unlock scanned documents such as clinical reports or regulatory filings alongside PDFs, images, and global corpora, turning hard-to-access content into usable signals.

ETL: Transform human-centric formats into machine-readable assets optimized for reasoning, while preserving semantic fidelity, structure, and provenance.

Dex (data interaction + transformation layer): Provide a layer for organizing, shaping, and interacting with enterprise data in an agent-ready way so agents can operate consistently as workflows scale across teams and use cases.

Data plane management (governance + control): SGP treats enterprise AI data as an evolving system: governed, monitored, versioned, and continuously improved over time. This prevents each new agent deployment from becoming a one-off pipeline rebuild and creates a stable foundation teams can trust.

Deep research for enterprise (reasoning + synthesis): On top of this foundation, SGP enables agentic research workflows that synthesize across internal sources with full traceability.

Semantic discovery at scale (reusable enterprise meaning): Because all sources are connected through the same semantic layer, discovery becomes reusable across use cases. SGP provides a unified semantic foundation that compounds over time so every new agent starts with more context, not from scratch.

On this foundation, SGP adds capabilities that govern, extend, and compound the system over time. Together, these capabilities form a shared, managed data foundation that every agent and application can rely on.
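The scanned clinical report example from earlier in this section can be sketched in a few lines of Python. This is not SGP's API; it assumes the OCR and extraction steps have already produced a dictionary of fields, and every name here (`LinkedReport`, `link_report`, the field keys, the sample identifiers) is hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class LinkedReport:
    """An extracted report tied to known records, with its origin kept."""
    report_id: str
    patient_id: str
    trial_id: Optional[str]
    source_file: str
    page: int


def link_report(extracted: dict, patients: dict, trials: dict) -> Optional[LinkedReport]:
    """Link extracted fields to existing patient and trial records.

    Returns None when the patient cannot be resolved, so the report can be
    routed to human review instead of being attached to a guessed record.
    """
    patient_id = extracted.get("patient_id")
    if patient_id not in patients:
        return None

    # A trial link is optional: keep it only if the trial is known.
    trial_id = extracted.get("trial_id")
    if trial_id not in trials:
        trial_id = None

    return LinkedReport(
        report_id=extracted["report_id"],
        patient_id=patient_id,
        trial_id=trial_id,
        source_file=extracted["source_file"],
        page=extracted["page"],
    )


# Hypothetical reference data and one extracted report.
patients = {"P-001": {"name": "example patient"}}
trials = {"T-42": {"phase": 2}}
extracted = {
    "report_id": "R-9",
    "patient_id": "P-001",
    "trial_id": "T-42",
    "source_file": "scan_0193.pdf",
    "page": 3,
}

print(link_report(extracted, patients, trials))
```

Keeping `source_file` and `page` on the linked record is what lets an agent trace any downstream answer back to the original scan.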

Clinical Records to Structured Intelligence

In healthcare, clinicians make high-stakes decisions under extreme time pressure, but critical patient context is often trapped in long, inconsistent PDF records. Intake forms, radiology and imaging reports, and historical documentation frequently arrive as scanned files, tables, and mixed-format documents that weren’t designed to be machine-readable.

One leading healthcare organization partnered with Scale to modernize this workflow through RecordTime, a generative AI application that automatically extracts clinically relevant information from patient records and presents it in a form clinicians can review quickly and confidently.

The impact is straightforward: instead of manually reading through large PDFs, physicians, nurses, and allied health staff can start with a structured summary of what matters, reducing administrative burden and accelerating clinical intake.

RecordTime succeeded because it didn’t treat clinical intake as a generic “document parsing” problem. It focused on transforming multimodal clinical records into trusted, reliable data that fits real workflows. That reliability translated into measurable outcomes:

- Improved record review efficiency, reducing time spent per patient record

- Higher clinician satisfaction, as outputs became more consistent and usable

- Growing adoption, with increasing monthly active users and more patient records processed through the system

Most importantly, the organization moved beyond one-off automation to a scalable foundation: a workflow where complex, multimodal documentation could be converted into structured intelligence, enabling teams to spend less time searching for information and more time working with it.

Agents Need Understandable Environments

Most enterprise AI programs fail for a simple reason: they try to deploy agents into an environment the agents can’t understand. An AI-native data layer solves this precursor problem. It transforms human-centric silos into connected, structured systems, so AI systems can consistently understand and reason over enterprise data.

This is the first pillar of Dialect: a unified, AI-native data foundation powered by SGP that makes enterprise knowledge usable in production.

Next in the series: once AI can read your organization, how does it learn how your organization decides? We’ll explore how the Dialect becomes a living system that captures tribal knowledge and compounds over time.
