Most enterprise AI deployments produce outputs. The problem is that these outputs rarely reflect the level of judgment that differentiates a strong enterprise from an exceptional one. What sets leading enterprises apart is how decisions are made: the embedded workflows, internal logic, risk posture, handling of edge cases, and nuanced judgment calls. It is the difference between a junior summary and senior-level analysis. In most organizations, this context isn't captured in any single system. It lives in people's heads, in practices scattered across teams, and in the invisible "how we do things here" rules that experienced operators internalize over years.

The first pillar of Dialect, the AI-native data layer, gave agents an environment they could understand. The second pillar, a living Dialect of tribal knowledge, makes the shift from modeling data to modeling expertise, teaching agents how the enterprise actually decides. It's the "Learning" node in the enterprise intelligence loop of Data → Learning → Oversight, a continual learning system that turns expert decisions into a reusable enterprise asset, so your AI compounds in capability with usage.

The Problem: AI Outputs Aren’t Enterprise-Grade

AI outputs are reasonable, but they just aren't enterprise-grade. If you’ve spent time with enterprise teams rolling out copilots or agents, you’ll hear the same frustrations that are all variations of one underlying problem: AI can't match the judgment of your best people.

- “Our best workflows live in people’s heads.” The highest-leverage processes aren't fully documented. The edge cases, heuristics, and judgment calls that make teams fast and effective are learned through experience, and they rarely get captured in a system.

- “AI gives answers that are reasonable, but not enterprise-grade.” It understands the general shape of the task, but misses what creates differentiation in your environment: policy nuance, internal standards, and domain-specific constraints. It also lacks expert judgment (the ability to weigh trade-offs under ambiguity and anticipate edge cases), and it does not capture the institutional knowledge or practical experience required to execute reliably in real-world settings.

- “Every team reinvents prompts and rules.” One group builds a prompt library. Another builds a separate agent. Everyone rebuilds the same scaffolding because the learning isn’t standardized, shared, or reusable across the organization.

- “We can’t get systems to improve with usage.” AI gets deployed as a static tool. It doesn’t accumulate institutional learning, and it rarely gets meaningfully better after the first rollout.

When AI can’t internalize how the business thinks, every deployment becomes fragile. Teams get stuck in an endless loop of patching prompts, chasing edge cases, and relying on humans to provide the judgment the system can’t yet learn.

What Dialect Does

The Dialect is a living system that learns how you decide. It treats enterprise expertise as the most valuable asset in the organization and turns it into a usable structure that AI agents can apply, execute against, and improve over time. That structure takes concrete form: decision traces that record how experts reason through problems, playbooks that codify recurring judgment patterns, decision criteria and comments that define what "good" looks like in specific contexts, escalation rules that mark where human judgment is still required, and sector-level patterns that capture what's learned across engagements. Traces are the raw material; the rest are distilled from them or encoded alongside them as the enterprise's standards.

As experts make decisions, adjust workflows, provide feedback, and validate outputs, the Dialect captures that signal through traces: structured records of the steps an agent took to reach a decision, alongside any human edits or corrections made along the way. These traces are the raw material of enterprise learning.
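To make the idea of a trace concrete, here is a minimal sketch of what such a record could look like. It is not the Dialect's actual schema: the `Trace` and `TraceStep` classes, their field names, and the sample due-diligence content are all hypothetical, chosen only to show agent steps stored side by side with human corrections.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class TraceStep:
    """One step the agent took, plus any human correction (hypothetical schema)."""
    action: str                        # e.g. "retrieve_documents"
    agent_output: str                  # what the agent produced at this step
    human_edit: Optional[str] = None   # expert's replacement text, if any

    @property
    def was_corrected(self) -> bool:
        return self.human_edit is not None and self.human_edit != self.agent_output

    @property
    def final_output(self) -> str:
        # The expert's version wins whenever one exists.
        return self.human_edit if self.human_edit is not None else self.agent_output


@dataclass
class Trace:
    """Structured record of how one decision was reached."""
    task_id: str
    steps: list[TraceStep] = field(default_factory=list)

    def corrected_steps(self) -> list[TraceStep]:
        return [step for step in self.steps if step.was_corrected]


# Made-up example: the expert rewrites the agent's draft risk summary.
trace = Trace(
    task_id="dd-001",
    steps=[
        TraceStep("retrieve_documents", "10-K, 10-Q"),
        TraceStep(
            "draft_risk_summary",
            "Revenue is stable.",
            human_edit="Revenue is stable, but depends heavily on one customer.",
        ),
    ],
)
```

Keeping the agent's output and the expert's edit in the same record is the design choice that matters here: the difference between the two is what later stages can learn from.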

Expert feedback comes in two forms. Explicit signals like approvals, ratings, and written corrections are easy to capture but rare. Most feedback is implicit, showing up in the work itself:

- what users edit or override

- which recommendations they accept

- what they ignore

- which documents they keep open when deciding

- what changes between a draft and the final deliverable

- which edge cases trigger escalations

These implicit signals are the clearest evidence of "how the business decides." The Dialect captures both explicit and implicit feedback and moves them through a consistent pipeline: traces record what happened, signals extract the patterns that matter, feedback structures those signals for reuse, and learning applies that feedback to improve the system.
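The four pipeline stages can be sketched in a few lines. This is an illustration under stated assumptions, not the Dialect's implementation: the event tuples, the outcome labels, the function names, and the 0.5 acceptance threshold are all invented for the example.

```python
from collections import Counter, defaultdict

# Traces (hypothetical): (recommendation_type, what the user did with it).
events = [
    ("flag_customer_concentration", "accepted"),
    ("flag_customer_concentration", "accepted"),
    ("flag_customer_concentration", "edited"),
    ("summarize_litigation", "overridden"),
    ("summarize_litigation", "overridden"),
    ("summarize_litigation", "ignored"),
]


def extract_signals(events):
    """Signals: count each implicit outcome per recommendation type."""
    counts = defaultdict(Counter)
    for rec_type, outcome in events:
        counts[rec_type][outcome] += 1
    return counts


def structure_feedback(signals):
    """Feedback: turn raw counts into an acceptance rate per recommendation type."""
    feedback = {}
    for rec_type, outcomes in signals.items():
        total = sum(outcomes.values())
        feedback[rec_type] = {
            "total": total,
            "acceptance_rate": outcomes["accepted"] / total,
        }
    return feedback


def needs_revision(feedback, threshold=0.5):
    """Learning (assumed policy): list recommendation types experts rarely accept as-is."""
    return sorted(
        rec_type
        for rec_type, stats in feedback.items()
        if stats["acceptance_rate"] < threshold
    )


feedback = structure_feedback(extract_signals(events))
to_revise = needs_revision(feedback)
```

In this toy data, experts never accept the litigation summaries unchanged, so that recommendation type is the one surfaced for revision, even though nobody filed an explicit rating.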

Layers of Enterprise Learning

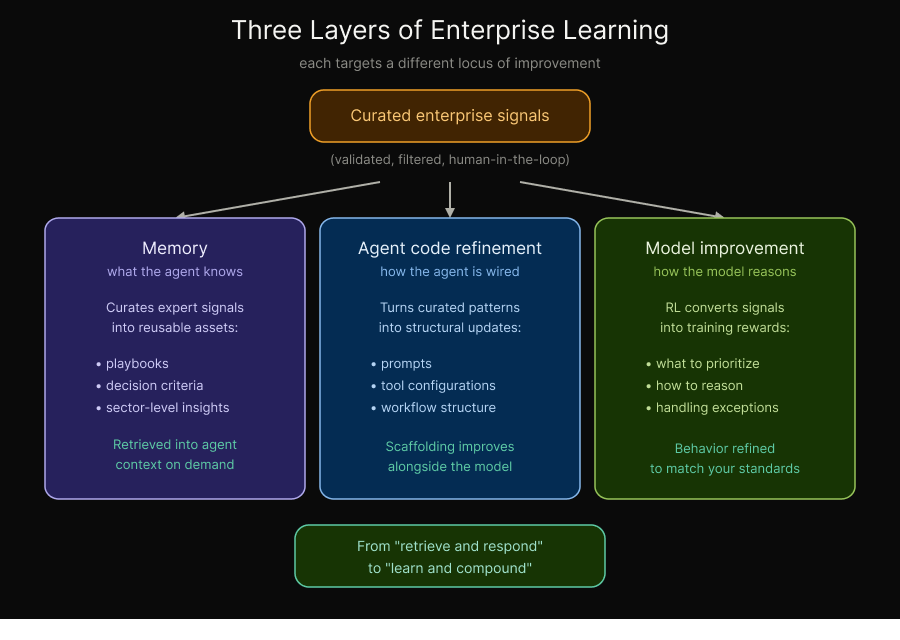

This learning happens across three complementary layers, each targeting a different locus of improvement: what the agent knows, how the agent is wired, and how the model reasons.

Memory compounds what the agent knows. The Dialect curates patterns from expert edits, approvals, overrides, and trace outcomes into reusable assets that get retrieved into the agent's context at the moment they matter.
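As a rough illustration of retrieving curated lessons into an agent's context, the sketch below ranks a small memory store by word overlap with the task. Everything here is an assumption made for the example: the `MEMORY` entries, the `retrieve` and `build_context` helpers, and the overlap scoring, which a production system would more plausibly replace with embedding-based search.

```python
import re

# Hypothetical curated memory: short lessons distilled from expert edits.
MEMORY = [
    "For SaaS targets, always report net revenue retention alongside ARR.",
    "Flag any customer that contributes more than a material share of revenue.",
    "In healthcare deals, check pending regulatory actions before valuation.",
]


def tokenize(text):
    """Lowercase the text and return its set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(query, memory, k=2):
    """Return up to k memory entries ranked by word overlap with the query."""
    query_words = tokenize(query)
    scored = [(len(query_words & tokenize(entry)), entry) for entry in memory]
    relevant = [pair for pair in scored if pair[0] > 0]
    ranked = sorted(relevant, key=lambda pair: -pair[0])
    return [entry for _, entry in ranked[:k]]


def build_context(task, memory):
    """Prepend the retrieved lessons to the task before it reaches the agent."""
    lessons = retrieve(task, memory)
    bullets = "\n".join(f"- {lesson}" for lesson in lessons)
    return f"Task: {task}\nRelevant lessons from past expert decisions:\n{bullets}"


context = build_context("Summarize revenue quality for a SaaS target", MEMORY)
```

Only the lessons that share vocabulary with the task are injected, so the healthcare entry stays out of a SaaS revenue review.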

Agent code refinement improves how the agent is wired. When reviewers consistently restructure an output, reorder steps, or correct how a tool is invoked, the signal points to the agent itself, not the model. Scale's research on agent optimization, including VeRO (Versioning, Rewards, and Observations), turns those patterns into automatic updates to prompts, tool configurations, and workflow structure. This closes the gap between "the model got it wrong" and "the agent was wired wrong," which in practice is where a large share of production issues actually live.

Model improvement targets how the model reasons. Scale's agent reinforcement learning platform converts curated enterprise signals into verifiable reward functions, allowing agents to be refined over time to match your standards.
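A verifiable reward is one that can be computed by a deterministic check rather than by opinion. The sketch below shows the general shape of that idea only; it is not Scale's platform API. The section names, the `[Doc N]` citation convention, the 200-word limit, and the equal weighting of checks are all assumptions made for illustration.

```python
import re

# Hypothetical standards a firm might distill from expert corrections.
REQUIRED_SECTIONS = ["Key Risks", "Recommendation"]
MAX_WORDS = 200


def has_required_sections(report):
    return all(section in report for section in REQUIRED_SECTIONS)


def cites_a_source(report):
    # Assumed convention: a bracketed reference such as [Doc 3] counts as a citation.
    return re.search(r"\[Doc \d+\]", report) is not None


def within_length(report):
    return len(report.split()) <= MAX_WORDS


CHECKS = [has_required_sections, cites_a_source, within_length]


def reward(report):
    """Fraction of checks passed, in [0, 1]. Same input always gives the same score."""
    return sum(check(report) for check in CHECKS) / len(CHECKS)


good = "Key Risks: customer concentration [Doc 3]. Recommendation: proceed with caution."
bad = "Looks fine overall."
```

Here `reward(good)` is 1.0 because all three checks pass, while `reward(bad)` is one third because it only satisfies the length limit. Scores like these can serve as a training signal precisely because anyone can rerun the checks and get the same number.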

Together, these layers move the enterprise from "retrieve and respond" to "learn and compound."

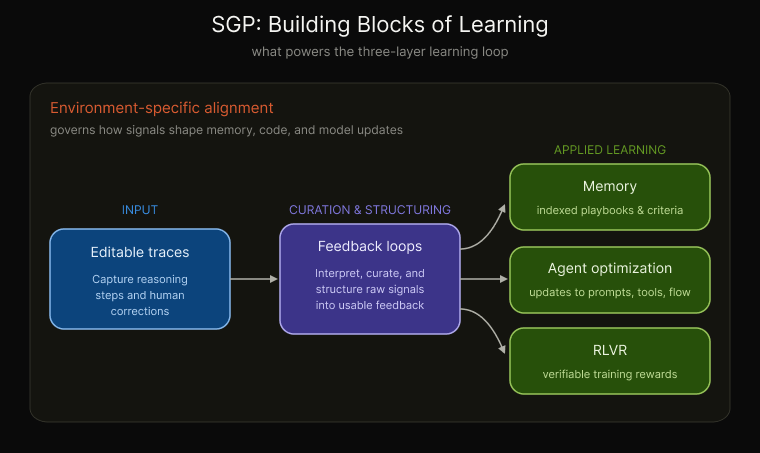

Inside SGP

SGP provides the building blocks that make this learning loop possible. Traces capture what happened. Feedback loops curate and structure those signals into usable feedback. That feedback then drives the three applied learning outputs: memory, agent optimization, and reinforcement learning with verifiable rewards (RLVR), all within the guardrails of environment-specific alignment.

Dialect in Practice

In professional services and due diligence, the value of the firm is its judgment, and its edge is the accumulated experience of thousands of past engagements. But that experience rarely becomes a reusable system. It’s distributed across deliverables, workplans, information request lists, and the lived habits of practitioners. Junior staff learn by shadowing, and quality often depends on a small number of “senior experts” who know what matters, where to look, and how to structure the final output.

In one ongoing engagement, the goal is to turn that institutional expertise into a continual learning system, so every engagement strengthens the next. Rather than treating due diligence as a static document-generation workflow, the system is designed to capture expert judgment from real execution and organize it into reusable, sector-level patterns and playbooks that can be retrieved in-context as analysts work. This is the shift in progress: moving tribal knowledge out of individual heads and into an enterprise learning system that compounds over time.

Decisions Compound Enterprise Learning

Enterprises don't win with AI by deploying a smarter model. They win by building a system that learns how the enterprise decides. The Dialect makes that possible. It transforms expert judgment into a living, compounding asset, captured through traces and feedback, expressed through memory, agent code refinement, and model improvement, and reinforced through continual evaluation and alignment.

Next in the series: how do you know your AI is operating correctly inside real workflows? We'll explore the final pillar: reliable enterprise-specific evaluations, and why they're the foundation for trustworthy AI outcomes.
